These smart assistants, such as Siri or Alexa, use voice recognition to understand our everyday queries. They then use natural language generation (a subfield of NLP) to answer these queries. Through NLP, computers don’t just understand meaning; they also pick up on sentiment and intent. They then learn on the job, storing information and context to strengthen their future responses. In this piece, we’ll go into more depth on what NLP is, take you through a number of natural language processing examples, and show you how you can apply these within your business. A whole new world of unstructured data is now open for you to explore.

Notice that the term frequency values are the same for all of the sentences, since no word repeats within any single sentence. Next, we are going to use IDF values to get the closest answer to the query. Notice that the word “dog” or “doggo” can appear in many documents, which gives it a low IDF value. The word “cute,” however, comes up in relatively few of the dog descriptions, so its IDF value is higher, and that increases its TF-IDF value. So the word “cute” has more discriminative power than “dog” or “doggo.” Our search engine will then find the descriptions that contain the word “cute,” which is what the user was looking for.
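To make the TF-IDF reasoning above concrete, here is a minimal sketch in plain Python. The three dog descriptions, the whitespace tokenizer, and the function names are all assumptions made for illustration; they are not taken from the original example, and a production search engine would use a library implementation with smoothing.

```python
import math

# Hypothetical dog descriptions (illustrative only, not the article's data).
documents = [
    "the dog runs in the park",
    "a cute dog sleeps on the sofa",
    "the doggo barks at the dog next door",
]

def tokenize(text):
    # Naive tokenizer: lowercase and split on whitespace.
    return text.lower().split()

corpus = [tokenize(d) for d in documents]

def tf(term, tokens):
    # Term frequency: share of the document's tokens equal to the term.
    return tokens.count(term) / len(tokens)

def idf(term, corpus):
    # Inverse document frequency: log(N / number of documents containing the term).
    containing = sum(1 for tokens in corpus if term in tokens)
    return math.log(len(corpus) / containing) if containing else 0.0

def search(query):
    # Score each document by the summed TF-IDF of the query terms,
    # then return the highest-scoring description.
    scores = [
        sum(tf(t, tokens) * idf(t, corpus) for t in tokenize(query))
        for tokens in corpus
    ]
    best = max(range(len(corpus)), key=scores.__getitem__)
    return documents[best]

print(idf("dog", corpus), idf("cute", corpus))
print(search("cute dog"))
```

Because “dog” occurs in every description in this toy corpus, its IDF is zero and it contributes nothing to the ranking. The query is decided entirely by “cute,” which occurs in only one description.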

Chatbots

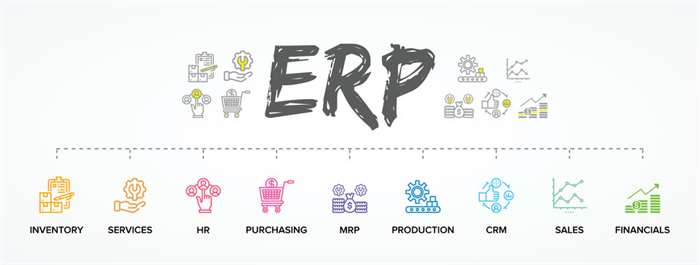

Companies nowadays have to process a lot of data and unstructured text. Organizing and analyzing this data manually is inefficient, subjective, and often impossible due to the volume. When you send out surveys, be it to customers, employees, or any other group, you need to be able to draw actionable insights from the data you get back. Customer service costs businesses a great deal in both time and money, especially during growth periods.

- Now that you’ve done some text processing tasks with small example texts, you’re ready to analyze a bunch of texts at once.

- In summary, a bag of words is a collection of words that represents a sentence along with each word’s count, where the order of occurrence is not relevant.

- Kea aims to alleviate your impatience by helping quick-service restaurants retain revenue that’s typically lost when the phone rings while on-site patrons are tended to.

- Elicit is designed for a growing number of specific tasks relevant to research, like summarization, data labeling, rephrasing, brainstorming, and literature reviews.

- Top word cloud generation tools can transform your insight visualizations with their creativity, and give them an edge.
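The bag-of-words idea summarized in the list above can be sketched in a few lines. This is a minimal illustration that assumes whitespace tokenization and lowercase normalization; the function name `bag_of_words` and the example sentences are made up for this sketch.

```python
from collections import Counter

def bag_of_words(sentence):
    # Lowercase, split on whitespace, and count each word.
    # Word order is discarded; only the counts remain.
    return Counter(sentence.lower().split())

a = bag_of_words("the dog chased the cat")
b = bag_of_words("the cat chased the dog")

print(a["the"])  # count of "the" in the first sentence
print(a == b)    # same words and counts, different order
```

The two sentences mean different things but produce identical bags, which shows both the simplicity of the representation and what it loses.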

Search engines have been part of our lives for a relatively long time. However, traditionally, they’ve not been particularly useful for determining the context of what and how people search. You may have seen predictive text pop up in an email you’re drafting on Gmail, or even in a text you’re crafting. Autocorrect is another example of text prediction that marks or changes misspellings or grammatical mistakes in Word documents.

Customer Service Automation

One example is smarter visual encodings, offering up the best visualization for the right task based on the semantics of the data. This opens up more opportunities for people to explore their data using natural language statements or question fragments made up of several keywords that can be interpreted and assigned a meaning. Applying language to investigate data not only enhances the level of accessibility, but lowers the barrier to analytics across organizations, beyond the expected community of analysts and software developers. To learn more about how natural language can help you better visualize and explore your data, check out this webinar.

Now that you understand how to generate the next word of a sentence, you can generate the required number of words in the same way by using a loop. You can pass the string to .encode(), which converts a string into a sequence of ids using the tokenizer and vocabulary. The transformers library provides task-specific pipelines for our needs. A language translator can be built in a few steps using Hugging Face’s transformers library. I am sure each of us has used a translator at some point in our lives!
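The idea of generating several words by repeating a single next-word step in a loop can be sketched without any model download. Note that this is a toy bigram model standing in for a trained language model: it is not the transformers API, and the corpus and the function names `next_word` and `generate` are assumptions made for illustration.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus; a real model would be trained on far more text.
corpus = "the dog chased the cat and the dog barked at the cat".split()

# Count which word follows which (bigram counts).
followers = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    followers[current][nxt] += 1

def next_word(word):
    # Return the most frequent follower of `word`,
    # or None if the word was never seen with a follower.
    options = followers.get(word)
    if not options:
        return None
    return options.most_common(1)[0][0]

def generate(start, n_words):
    # Repeat the single-step prediction n_words times,
    # feeding each predicted word back in as the new context.
    words = [start]
    for _ in range(n_words):
        nxt = next_word(words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)

print(generate("the", 4))
```

A neural language model follows the same pattern: predict one token, append it to the context, and repeat until enough tokens have been produced.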

Deeper Insights

We, as humans, perform natural language processing (NLP) considerably well, but even then, we are not perfect. We often misunderstand one thing for another, and we often interpret the same sentences or words differently. Large foundation models like GPT-3 exhibit abilities to generalize to a large number of tasks without any task-specific training.

This was so prevalent that many questioned if it would ever be possible to accurately translate text. Microsoft ran nearly 20 of the Bard’s plays through its Text Analytics API. The application charted emotional extremities in lines of dialogue throughout the tragedy and comedy datasets. Unfortunately, the machine reader sometimes had trouble deciphering comic from tragic. There’s also some evidence that so-called “recommender systems,” which are often assisted by NLP technology, may exacerbate the digital siloing effect. Named entity recognition (NER) concentrates on determining which items in a text (i.e. the “named entities”) can be located and classified into predefined categories.

Connect with your customers and boost your bottom line with actionable insights.

It’s your first step in turning unstructured data into structured data, which is easier to analyze. These are some of the basics for the exciting field of natural language processing (NLP). We hope you enjoyed reading this article and learned something new. Suggestions and feedback are crucial for us to continue to improve.

Text processing involves preparing the text corpus to make it more usable for NLP tasks. The transformers library was developed by Hugging Face and provides state-of-the-art models. It is an advanced library known for its transformer modules, and it is currently under active development. Another common use of NLP is for text prediction and autocorrect, which you’ve likely encountered many times before while messaging a friend or drafting a document.

History of NLP

Before working with an example, we need to know what phrases are. If accuracy is not the project’s final goal, then stemming is an appropriate approach. If higher accuracy is crucial and the project is not on a tight deadline, then the best option is lemmatization (lemmatization has a lower processing speed compared to stemming). After stemming, many words do not end up as recognizable dictionary words. As shown above, all the punctuation marks from our text are excluded.
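The difference between stemming and lemmatization can be illustrated with a deliberately simplified sketch. The suffix stripper below is a toy, not the Porter algorithm or any NLTK stemmer, and the `LEMMAS` table is a hand-written stand-in for the dictionary a real lemmatizer would consult. All names here are assumptions made for illustration.

```python
# Suffixes tried in order; a real stemmer applies many more rules.
SUFFIXES = ("ing", "ies", "ed", "es", "s")

def toy_stem(word):
    # Strip the first matching suffix, keeping at least three characters.
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word

# Hand-written lookup table standing in for a real lemmatizer's dictionary.
LEMMAS = {"studies": "study", "running": "run", "cities": "city", "was": "be"}

def toy_lemmatize(word):
    # Look the word up; fall back to the word itself if it is unknown.
    return LEMMAS.get(word, word)

for w in ["studies", "running", "cities"]:
    print(w, toy_stem(w), toy_lemmatize(w))
```

The stemmer is fast and rule-based but yields fragments such as “runn” and “cit,” while the lookup returns real dictionary forms at the cost of needing linguistic resources.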

Deep learning techniques such as Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) have been applied to tasks such as sentiment analysis and machine translation, achieving state-of-the-art results. Natural Language Processing (NLP) is a subfield of artificial intelligence that deals with the interaction between computers and humans in natural language. It involves the use of computational techniques to process and analyze natural language data, such as text and speech, with the goal of understanding the meaning behind the language.

Future applications of natural language processing

By tokenizing the text with word_tokenize(), we can get the text as words. We can then see that the entire text of our data is represented as words, and that the total number of words here is 144. The NLTK Python framework is generally used as an education and research tool. However, its ease of use means it can also be used to build exciting programs. If you want to learn more about how and why conversational interfaces have developed, check out our introductory course.
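The tokenize-then-count step described above can be sketched with the standard library alone. The regular expression below is a simplified stand-in for NLTK’s word_tokenize(), which handles contractions and punctuation differently (for example, it splits “isn't” into two tokens). The sample text and the function name `simple_tokenize` are assumptions made for illustration.

```python
import re

def simple_tokenize(text):
    # Match words (allowing internal apostrophes) or single punctuation marks.
    return re.findall(r"\w+(?:'\w+)*|[^\w\s]", text)

text = "NLP is fun. Tokenizing text isn't hard!"
tokens = simple_tokenize(text)

# Keep only tokens that start with a letter or digit to count words.
words = [t for t in tokens if t[0].isalnum()]

print(tokens)
print(len(words))
```

Counting the word tokens in this way is how a figure such as the total number of words in a text is obtained.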